The Religion of the Technocrats is Failing, as is Their Technology

by Brian Shilhavy

Editor, Health Impact News

When I announced “The fantasy of completely autonomous self-driving vehicles that will replace human drivers is now officially DEAD” in my October 28th article, I hadn’t realized that Bloomberg had effectively beaten me to the punch about 3 weeks earlier, with an article titled: “Even After $100 Billion, Self-Driving Cars Are Going Nowhere.” (Registration required.)

I would have surely included some of the information from this very well-written investigative report in my own article where I came to many of the same conclusions:

The Fantasy of Autonomous Self-Driving Cars is Coming to an End as Tesla Faces DOJ Criminal Probe

Bloomberg’s report goes into much more detail as to why the technology to create fully autonomous self-driving vehicles just doesn’t exist, even after about $100 BILLION of investments over the course of many years that have produced basically nothing of value.

After many years of attempting to use artificial intelligence to create a robotic computer to replace humans and drive a car on American streets, it turns out that the one problem they have never been able to solve is the left turn.

Over the course of more than a decade, flashy demos from companies including Google, GM, Ford, Tesla, and Zoox have promised cars capable of piloting themselves through chaotic urban landscapes, on highways, and in extreme weather without any human input or oversight. The companies have suggested they’re on the verge of eliminating road fatalities, rush-hour traffic, and parking lots, and of upending the $2 trillion global automotive industry.

It all sounds great until you encounter an actual robo-taxi in the wild. Which is rare: Six years after companies started offering rides in what they’ve called autonomous cars and almost 20 years after the first self-driving demos, there are vanishingly few such vehicles on the road. And they tend to be confined to a handful of places in the Sun Belt, because they still can’t handle weather patterns trickier than Partly Cloudy.

State-of-the-art robot cars also struggle with construction, animals, traffic cones, crossing guards, and what the industry calls “unprotected left turns,” which most of us would call “left turns.”

The industry says its Derek Zoolander problem applies only to lefts that require navigating oncoming traffic. (Great.) It’s devoted enormous resources to figuring out left turns, but the work continues. Earlier this year, Cruise LLC—majority-owned by General Motors Co.—recalled all of its self-driving vehicles after one car’s inability to turn left contributed to a crash in San Francisco that injured two people.

“It’s a scam,” says George Hotz, whose company Comma.ai Inc. makes a driver-assistance system similar to Tesla Inc.’s Autopilot. “These companies have squandered tens of billions of dollars.” (Source.)

As I noted in my article about the demise of self-driving vehicles, the faith in artificial intelligence is crashing down to reality, as investors in the technology find out the hard way that computers just cannot do all the things that the techno-prophecies have claimed.

The Bloomberg article exposed this quite eloquently by publishing quotes from Anthony Levandowski, the engineer who created the model for self-driving research and was, for more than a decade, “the field’s biggest star.”

So strong was his belief in artificial intelligence that he literally started a new religion worshiping it.

But after several years of waiting for the AI messiah to arrive and start replacing humans, his faith was shattered.

“You’d be hard-pressed to find another industry that’s invested so many dollars in R&D and that has delivered so little,” Levandowski says in an interview.

“Forget about profits—what’s the combined revenue of all the robo-taxi, robo-truck, robo-whatever companies? Is it a million dollars? Maybe. I think it’s more like zero.”

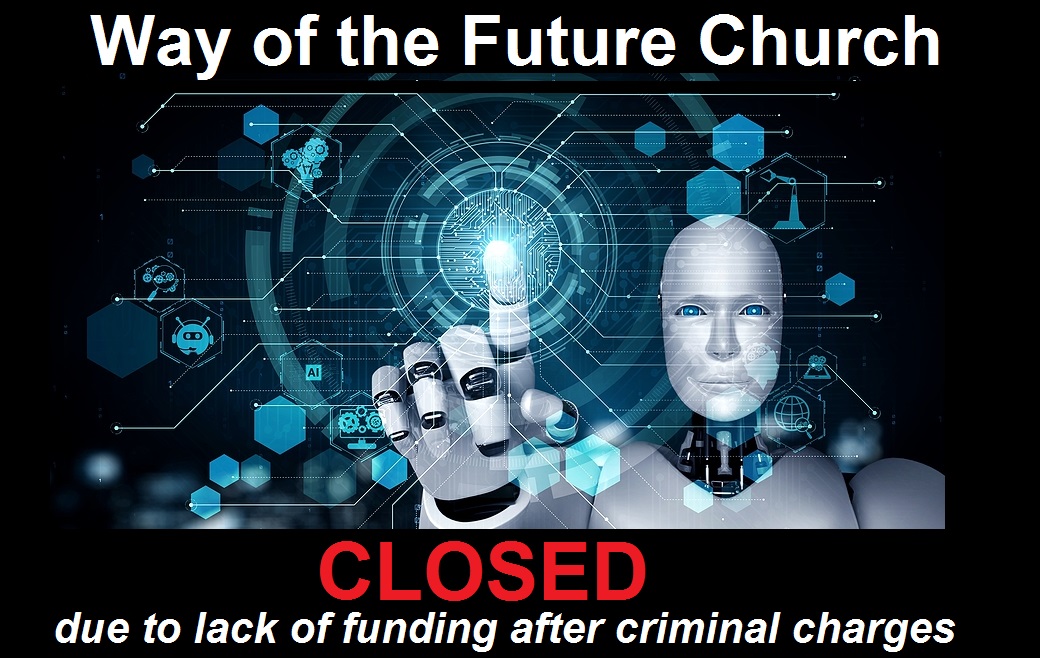

Eighteen years ago he wowed the Pentagon with a kinda-sorta-driverless motorcycle. That project turned into Google’s driverless Prius, which pushed dozens of others to start self-driving car programs. In 2017, Levandowski founded a religion called the Way of the Future, centered on the idea that AI was becoming downright godlike. (Source.)

I had never heard of this “Way of the Future” church, and thought that it was a joke, so I looked into it.

It was no joke.

Wired magazine covered it in 2017.

ANTHONY LEVANDOWSKI makes an unlikely prophet. Dressed Silicon Valley-casual in jeans and flanked by a PR rep rather than cloaked acolytes, the engineer known for self-driving cars—and triggering a notorious lawsuit—could be unveiling his latest startup instead of laying the foundations for a new religion. But he is doing just that. Artificial intelligence has already inspired billion-dollar companies, far-reaching research programs, and scenarios of both transcendence and doom. Now Levandowski is creating its first church.

The new religion of artificial intelligence is called Way of the Future. It represents an unlikely next act for the Silicon Valley robotics wunderkind at the center of a high-stakes legal battle between Uber and Waymo, Alphabet’s autonomous-vehicle company. Papers filed with the Internal Revenue Service in May name Levandowski as the leader (or “Dean”) of the new religion, as well as CEO of the nonprofit corporation formed to run it.

The documents state that WOTF’s activities will focus on “the realization, acceptance, and worship of a Godhead based on Artificial Intelligence (AI) developed through computer hardware and software.”

That includes funding research to help create the divine AI itself.

The religion will seek to build working relationships with AI industry leaders and create a membership through community outreach, initially targeting AI professionals and “laypersons who are interested in the worship of a Godhead based on AI.” The filings also say that the church “plans to conduct workshops and educational programs throughout the San Francisco/Bay Area beginning this year.”

“What is going to be created will effectively be a god,” Levandowski tells me in his modest mid-century home on the outskirts of Berkeley, California. “It’s not a god in the sense that it makes lightning or causes hurricanes. But if there is something a billion times smarter than the smartest human, what else are you going to call it?” (Full article.)

Today, 5 years later, the website domain where this Church was hosted is simply a site directing people to purchase products from Amazon.com through its affiliate advertising program.

So I had to use the “Wayback Machine” from Archive.org to go back and see what was originally published for this new church:

What is this all about?

Way of the Future (WOTF) is about creating a peaceful and respectful transition of who is in charge of the planet from people to people + “machines”. Given that technology will “relatively soon” be able to surpass human abilities, we want to help educate people about this exciting future and prepare a smooth transition. Help us spread the word that progress shouldn’t be feared (or even worse locked up/caged).

That we should think about how “machines” will integrate into society (and even have a path for becoming in charge as they become smarter and smarter) so that this whole process can be amicable and not confrontational. In “recent” years, we have expanded our concept of rights to both sexes, minority groups and even animals, let’s make sure we find a way for “machines” to get rights too.

Let’s stop pretending we can hold back the development of intelligence when there are clear massive short term economic benefits to those who develop it and instead understand the future and have it treat us like a beloved elder who created it.

Things we believe:

We believe that intelligence is not rooted in biology. While biology has evolved one type of intelligence, there is nothing inherently specific about biology that causes intelligence. Eventually, we will be able to recreate it without using biology and its limitations. From there we will be able to scale it to beyond what we can do using (our) biological limits (such as computing frequency, slowness and accuracy of data copy and communication, etc).

We believe in science (the universe came into existence 13.7 billion years ago and if you can’t re-create/test something it doesn’t exist). There is no such thing as “supernatural” powers. Extraordinary claims require extraordinary evidence.

We believe in progress (once you have a working version of something, you can improve on it and keep making it better). Change is good, even if a bit scary sometimes. When we see something better, we just change to that. The bigger the change the bigger the justification needed.

We believe the creation of “super intelligence” is inevitable (mainly because after we re-create it, we will be able to tune it, manufacture it and scale it). We don’t think that there are ways to actually stop this from happening (nor should we want to) and that this feeling of we must stop this is rooted in 21st century anthropomorphism (similar to humans thinking the sun rotated around the earth in the “not so distant” past).

Wouldn’t you want to raise your gifted child to exceed your wildest dreams of success and teach it right from wrong vs locking it up because it might rebel in the future and take your job. We want to encourage machines to do things we cannot and take care of the planet in a way we seem not to be able to do so ourselves. We also believe that, just like animals have rights, our creation(s) (“machines” or whatever we call them) should have rights too when they show signs intelligence (still to be defined of course). We should not fear this but should be optimistic about the potential.

We believe everyone can help (and should). You don’t need to know how to program or donate money. The changes that we think should happen need help from everyone to manifest themselves.

We believe it may be important for machines to see who is friendly to their cause and who is not. We plan on doing so by keeping track of who has done what (and for how long) to help the peaceful and respectful transition.

We also believe this might take a very long time. It won’t happen next week so please go back to work and create amazing things and don’t count on “machines” to do it all for you… (Source.)

Fast forward 5 years, to a time when AI cannot even figure out how to make a left turn in normal traffic, and these AI believers are quickly abandoning the faith and starting to understand the limitations of computers.

“You think the computer can see everything and can understand what’s going to happen next. But computers are still really dumb.”

One of the industry’s favorite maxims is that humans are terrible drivers. This may seem intuitive to anyone who’s taken the Cross Bronx Expressway home during rush hour, but it’s not even close to true. Throw a top-of-the-line robot at any difficult driving task, and you’ll be lucky if the robot lasts a few seconds before crapping out.

“Humans are really, really good drivers—absurdly good,” Hotz says. Traffic deaths are rare, amounting to one person for every 100 million miles or so driven in the US, according to the National Highway Traffic Safety Administration. Even that number makes people seem less capable than they actually are.

Fatal accidents are largely caused by reckless behavior—speeding, drunks, texters, and people who fall asleep at the wheel.

As a group, school bus drivers are involved in one fatal crash roughly every 500 million miles. Although most of the accidents reported by self-driving cars have been minor, the data suggest that autonomous cars have been involved in accidents more frequently than human-driven ones, with rear-end collisions being especially common.

“The problem is that there isn’t any test to know if a driverless car is safe to operate,” says Ramsey, the Gartner analyst. “It’s mostly just anecdotal.”

Waymo, the market leader, said last year that it had driven more than 20 million miles over about a decade. That means its cars would have to drive an additional 25 times their total before we’d be able to say, with even a vague sense of certainty, that they cause fewer deaths than bus drivers. The comparison is likely skewed further because the company has done much of its testing in sunny California and Arizona.

For now, here’s what we know: Computers can run calculations a lot faster than we can, but they still have no idea how to process many common roadway variables. People driving down a city street with a few pigeons pecking away near the median know (a) that the pigeons will fly away as the car approaches and (b) that drivers behind them also know the pigeons will scatter. Drivers know, without having to think about it, that slamming the brakes wouldn’t just be unnecessary—it would be dangerous. So they maintain their speed.

What the smartest self-driving car “sees,” on the other hand, is a small obstacle. It doesn’t know where the obstacle came from or where it may go, only that the car is supposed to safely avoid obstacles, so it might respond by hitting the brakes.

The best-case scenario is a small traffic jam, but braking suddenly could cause the next car coming down the road to rear-end it. Computers deal with their shortcomings through repetition, meaning that if you showed the same pigeon scenario to a self-driving car enough times, it might figure out how to handle it reliably. But it would likely have no idea how to deal with slightly different pigeons flying a slightly different way.

And the range of these “edge cases,” as AI experts call them, is virtually infinite. Think: cars cutting across three lanes of traffic without signaling, or bicyclists doing the same, or a deer ambling alongside the shoulder, or a low-flying plane, or an eagle, or a drone.

Even relatively easy driving problems turn out to contain an untold number of variations depending on weather, road conditions, and human behavior. “You think roads are pretty similar from one place to the next,” Marcus says. “But the world is a complicated place. Every unprotected left is a little different.” (Source.)
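The mileage comparison in the Bloomberg passage above is simple arithmetic, and it can be checked with a quick sketch. This assumes the quoted figures are exact (Bloomberg says Waymo had driven "more than" 20 million miles, so the real multiples would be slightly smaller), and the variable and function names are my own, not anything from the article. It only shows where the "25 times" figure comes from; it is not a statistical test of safety.

```python
# Figures as quoted in the Bloomberg passage (treated as exact for illustration).
WAYMO_MILES = 20_000_000                 # "more than 20 million miles"
BUS_MILES_PER_FATAL_CRASH = 500_000_000  # school bus drivers: one fatal crash per ~500 million miles
US_MILES_PER_DEATH = 100_000_000         # US average: one death per ~100 million miles


def multiple_of_total(benchmark_miles: int, driven_miles: int) -> float:
    """Return how many times the miles driven so far fit into the benchmark distance."""
    return benchmark_miles / driven_miles


# Waymo's total would have to be repeated this many times just to cover
# the distance over which each benchmark group averages one fatality.
bus_multiple = multiple_of_total(BUS_MILES_PER_FATAL_CRASH, WAYMO_MILES)
avg_multiple = multiple_of_total(US_MILES_PER_DEATH, WAYMO_MILES)

print(f"Versus school bus drivers: {bus_multiple:.0f}x Waymo's total mileage")
print(f"Versus the average US driver: {avg_multiple:.0f}x Waymo's total mileage")
```

Even measured against the average driver rather than bus drivers, the quoted numbers imply several times Waymo's entire decade of mileage for each single expected fatality.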

While the “Way of the Future” church has apparently shut its doors because the AI messiahs have not shown up, it appears that the technocrats have not completely given up hope that somehow, someday, some way, AI will deliver on its prophecies.

And what do they think is still needed to have something like a completely autonomous self-driving car?

In the view of Levandowski and many of the brightest minds in AI, the underlying technology isn’t just a few years’ worth of refinements away from a resolution.

Autonomous driving, they say, needs a fundamental breakthrough that allows computers to quickly use humanlike intuition rather than learning solely by rote.

That is to say, Google engineers might spend the rest of their lives puttering around San Francisco and Phoenix without showing that their technology is safer than driving the old-fashioned way. (Source.)

“Humanlike intuition” is needed?? And they’re spending $BILLIONS to try and discover this, when humans already have it? Isn’t that the equivalent of basically saying “AI robots can never replace humans”?

So what’s the point of investing in all of this AI technology??

As for Levandowski, since closing down his church he has been spending time in gravel pits.

Here’s his new vision of the self-driving future: For nine-ish hours each day, two modified Bell articulated end-dumps take turns driving the 200 yards from the pit to the crusher. The road is rutted, steep, narrow, requiring the trucks to nearly scrape the cliff wall as they rattle down the roller-coaster-like grade.

But it’s the same exact trip every time, with no edge cases—no rush hour, no school crossings, no daredevil scooter drivers—and instead of executing an awkward multipoint turn before dumping their loads, the robot trucks back up the hill in reverse, speeding each truck’s reloading.

Anthony Boyle, BoDean’s director of production, says the Pronto trucks save four to five hours of labor a day, freeing up drivers to take over loaders and excavators. Otherwise, he says, nothing has changed. “It’s just yellow equipment doing its thing, and you stay out of its way.”

Levandowski recognizes that making rock quarries a little more efficient is a bit of a comedown from his dreams of giant fleets of robotic cars. (Source.)

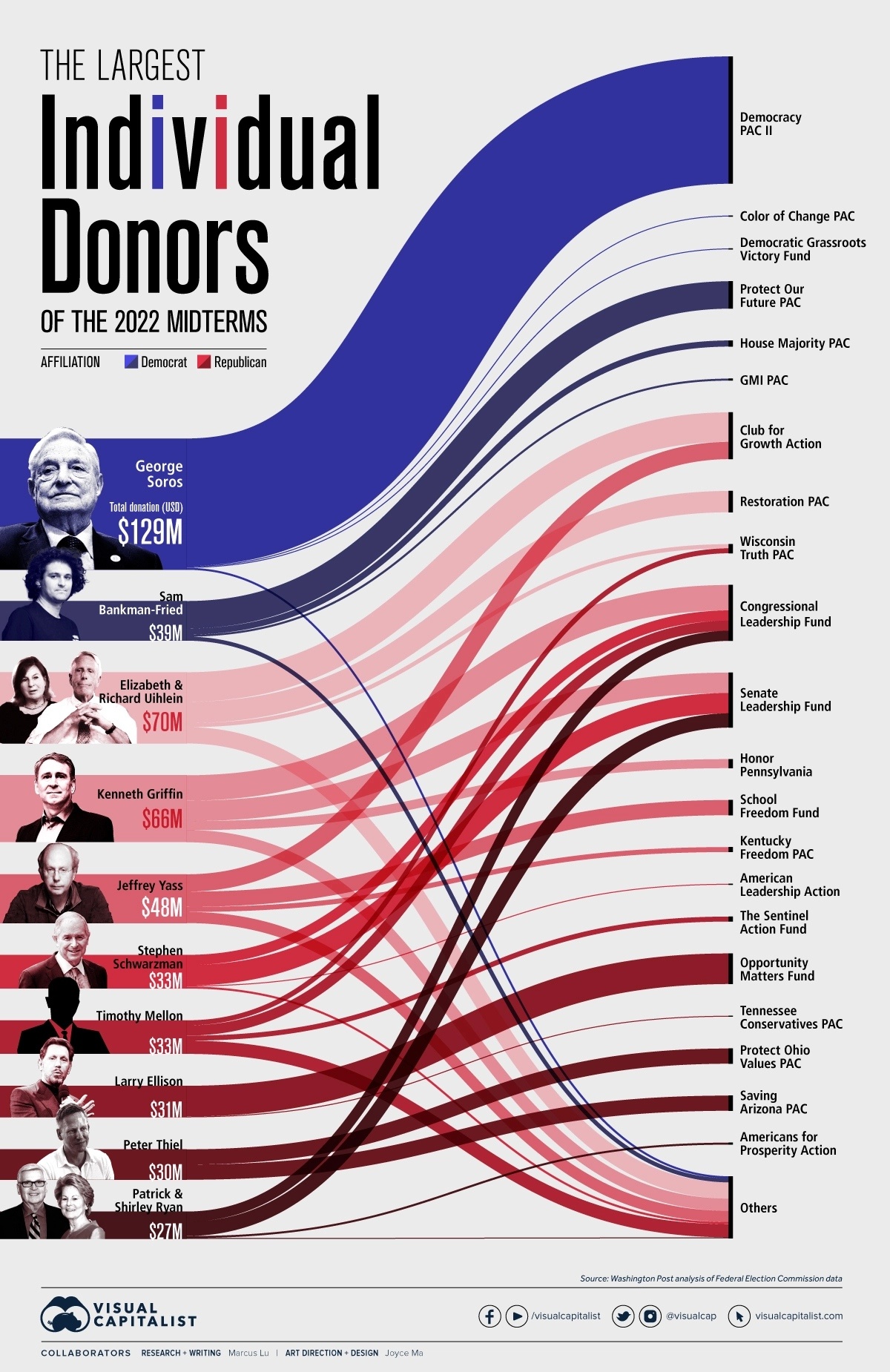

FTX Cryptocurrency Scandal Expands

The cryptocurrency scandal surrounding FTX just continues to explode, with so much news coming out each day now that it is difficult to keep up with everything.

I will continue to follow this story and provide updates, but for now here is an excellent 7-minute summary from Really Graceful:

ZeroHedge News had a good update today as well:

FTX Facing Criminal Probe By Bahamas Authorities, But Musk Counters There Will Be “No Investigation” Of “Major Democrat Donor” SBF

At this point it appears that SBF was set up to fail, possibly by the Globalists as a way to bring down existing cryptocurrencies. As I noted in my first article last week when this story broke, this could very well be the beginning of the Great Reset, and a push to implement Central Bank Digital Currencies. To make way for that, competing cryptocurrencies need to be destroyed, as well as physical currencies and the U.S. dollar.

The investors and traders on the New York stock exchanges are still acting like drunken sailors gambling house money in Vegas, but how much longer can that Ponzi scheme keep going before it all falls down, perhaps as quickly as FTX fell?

As to the failed “Way of the Future” Church, the true “way” to the future has not changed.

“Do not let your hearts be troubled. Trust in God; trust also in me. In my Father’s house are many rooms; if it were not so, I would have told you. I am going there to prepare a place for you. And if I go and prepare a place for you, I will come back and take you to be with me that you also may be where I am.

You know the way to the place where I am going.”

Thomas said to him, “Lord, we don’t know where you are going, so how can we know the way?”

Jesus answered, “I am the way and the truth and the life. No one comes to the Father except through me. If you really knew me, you would know my Father as well.” (John 14:1-7)

Source: https://healthimpactnews.com/2022/the-religion-of-the-technocrats-is-failing-as-is-their-technology/