Google and Facebook Working on Artificial Intelligence that Can Dream and Paint Pictures

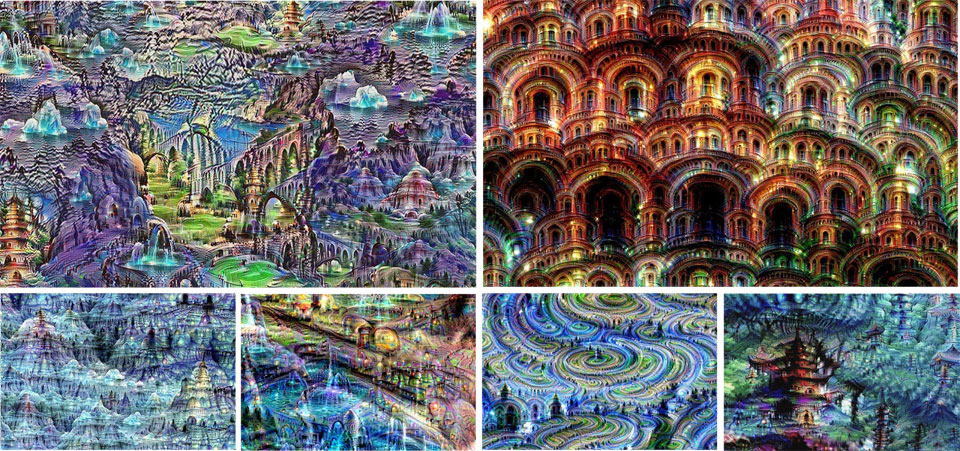

Both tech giants are building massive neural networks, artificial brains that can distinguish a dog from a car (and from other objects) in a digital image, translate language, recognize the spoken word and even teach a robot how to screw in a light bulb. Turn these brains ‘upside down’, and they become capable of creating images instead of only recognizing them.

Google is ‘teaching’ its machines to identify patterns in an image that resemble features they have learned to recognize, enhance those patterns and then repeat the process on the enhanced image, creating ‘a feedback loop’.

“If a cloud looks a little bit like a bird, the network will make it look more like a bird. This in turn will make the network recognize the bird even more strongly on the next pass and so forth, until a highly detailed bird appears, seemingly out of nowhere,” Google wrote in a blog post explaining the project.

The company calls this technique “Inceptionism”.

“Instead of exactly prescribing which feature we want the network to amplify, we can also let the network make that decision,” commented Google’s Alexander Mordvintsev.
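To make the feedback loop concrete, here is a minimal Python sketch under loud assumptions: a tiny, randomly initialized one-layer ReLU ‘network’ stands in for Google's trained image classifier, and the helper names (`relu`, `matvec`, `objective_and_grad`, `dream`) are hypothetical. In the spirit of Mordvintsev's remark about letting the network choose what to amplify, the sketch does gradient ascent on the pixels to maximize the squared activations of the layer, then feeds each enhanced image back in for the next pass. The objective and the step normalization are common choices for this kind of technique, not details confirmed by Google's post.

```python
import random


def relu(v):
    """Element-wise ReLU: negative values become 0."""
    return [max(0.0, a) for a in v]


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def objective_and_grad(W, x):
    """Return 0.5 * sum(h**2) for h = relu(W x), plus its gradient w.r.t. x.

    Maximizing this 'activation energy' amplifies whatever features the layer
    already detects, so the network, not the user, picks what to enhance.
    (Assumed objective; hypothetical helper.)
    """
    h = relu(matvec(W, x))
    obj = 0.5 * sum(a * a for a in h)
    # d(obj)/dx = W^T h; inactive units have h == 0, so the ReLU mask is implicit.
    grad = [sum(W[j][i] * h[j] for j in range(len(h))) for i in range(len(x))]
    return obj, grad


def dream(W, image, steps=20, step_size=0.05):
    """Feedback loop: enhance the image, then feed the result back in."""
    x = list(image)
    history = []
    for _ in range(steps):
        obj, grad = objective_and_grad(W, x)
        history.append(obj)
        # Normalize by the mean absolute gradient so step sizes stay comparable.
        scale = sum(abs(g) for g in grad) / len(grad) + 1e-8
        x = [xi + step_size * g / scale for xi, g in zip(x, grad)]
    history.append(objective_and_grad(W, x)[0])
    return x, history


# Hypothetical stand-in for a trained network: 16 random "feature detectors"
# looking at a flattened 8x8 grayscale "image" (64 pixel values).
rng = random.Random(0)
n_pixels, n_features = 64, 16
W = [[rng.gauss(0.0, 1.0) for _ in range(n_pixels)] for _ in range(n_features)]
image = [rng.random() for _ in range(n_pixels)]

dreamed, history = dream(W, image)
print(f"activation energy: {history[0]:.2f} -> {history[-1]:.2f}")
```

With a trained network in place of the random weights, a loop like this is the kind of process Google describes for turning a bird-shaped cloud into a bird; here it only shows the objective rising on every pass, since each enhanced image becomes the input for the next one.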
